When a tower controller initiates a crash phone alert, every second counts. But critical details — runway, aircraft type, fuel load, souls on board — are delivered verbally, often at a pace that's difficult to catch in the chaos of an emergency response. What if those details were automatically extracted from the audio, transcribed, and displayed on screens throughout the airport before the first ARFF truck even leaves the station?

In a conventional crash phone scenario, the tower controller picks up the phone, presses a button, and speaks. The alert audio broadcasts to ARFF stations, operations, and other connected locations. Firefighters hear the message, gear up, and roll. But if they miss the first few seconds — or if the audio quality isn't perfect — they may head out without key details. The follow-up comes over radio, eating into response time.

The problem compounds when airports need to notify external agencies. Someone has to call mutual aid agencies one by one, then manually log into an operations management system like Everbridge or Veoci, type in the event details, and trigger a secondary notification. At many airports, the plane has already been on the ground for five minutes or more before that secondary alert goes out.

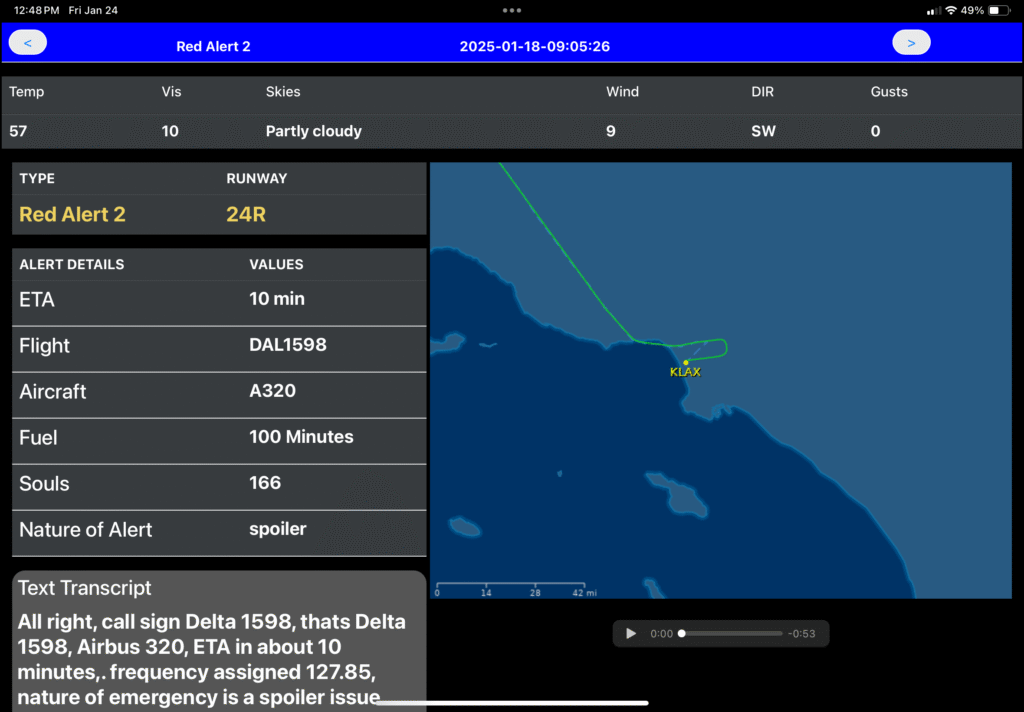

Modern emergency notification systems now incorporate AI-powered speech-to-text capabilities that process the tower controller's spoken alert in real time. The audio is automatically transcribed, and an intelligent extraction engine pulls key data points from the transcript: alert type, runway ID, flight number, aircraft type, ETA, fuel amount, souls on board, and wind conditions.
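To make the extraction step concrete, here is a minimal sketch of how key fields might be pulled from a transcript with regular expressions. It is an illustration only, not the engine any vendor uses: the `AlertFields` class, the `extract_fields` function, the three-airline `CALLSIGNS` table, and the patterns themselves are all assumptions, and real controller phraseology varies far more than these patterns allow.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical callsign-to-ICAO mapping; a real system would need a full table.
CALLSIGNS = {"delta": "DAL", "united": "UAL", "american": "AAL"}
WORD_DIGITS = {"one": "1", "two": "2", "three": "3"}


@dataclass
class AlertFields:
    alert_type: Optional[str] = None
    runway: Optional[str] = None
    flight_number: Optional[str] = None
    aircraft_type: Optional[str] = None
    souls_on_board: Optional[int] = None
    fuel_pounds: Optional[int] = None


def extract_fields(transcript: str) -> AlertFields:
    """Pull key data points out of a transcript; unmatched fields stay None."""
    text = transcript.lower()
    fields = AlertFields()

    # "alert 2" or "alert two"
    m = re.search(r"\balert\s+(\d|one|two|three)\b", text)
    if m:
        fields.alert_type = WORD_DIGITS.get(m.group(1), m.group(1))

    # "runway 27 left" -> "27L"
    m = re.search(r"\brunway\s+(\d{1,2})\s*(left|right|center|[lrc])?\b", text)
    if m:
        suffix = m.group(2)[0].upper() if m.group(2) else ""
        fields.runway = m.group(1) + suffix

    # "delta 1234" -> "DAL1234" (only for callsigns in the assumed table)
    callsign_pattern = "|".join(CALLSIGNS)
    m = re.search(rf"\b({callsign_pattern})\s+(\d{{1,4}})\b", text)
    if m:
        fields.flight_number = CALLSIGNS[m.group(1)] + m.group(2)

    # Type codes such as "A320" or "B738" (assumed letter-plus-three-digits form)
    m = re.search(r"\b([ab]\d{3})\b", text)
    if m:
        fields.aircraft_type = m.group(1).upper()

    m = re.search(r"\b(\d+)\s+souls\b", text)
    if m:
        fields.souls_on_board = int(m.group(1))

    # "9,000 pounds" or "9000 pounds"
    m = re.search(r"\b(\d[\d,]*)\s+pounds\b", text)
    if m:
        fields.fuel_pounds = int(m.group(1).replace(",", ""))

    return fields
```

Called on a transcript such as "Alert 2, runway 27 left, Delta 1234, Airbus A320, 148 souls on board, 9,000 pounds of fuel", this sketch would fill all six fields. Anything it cannot match stays `None`, so a display can show a blank instead of a guess.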

This extracted data populates digital displays in the ARFF station, operations center, vehicles, and mobile devices within seconds of the alert being initiated. Responders get structured, at-a-glance information — not just replayed audio — before they even leave the building.

The system goes further by cross-referencing the flight number with FlightAware data. If the tower controller says the aircraft is an A320, but the actual transponder data shows an A333, the system pulls the correct aircraft information. It also retrieves real-time wind data and, for active flights, can display the aircraft's current position on a map.
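The reconciliation logic described here can be sketched in a few lines. This is an assumption about how such a check might work, not the vendor's implementation: `reconcile_aircraft_type` and `ReconciledAircraft` are hypothetical names, and the flight-data lookup is passed in as a plain callable so that no particular FlightAware API is implied.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ReconciledAircraft:
    aircraft_type: Optional[str]
    source: str      # "flight_data", "spoken", or "none"
    mismatch: bool   # True when the spoken type disagreed with flight data


def reconcile_aircraft_type(
    flight_number: Optional[str],
    spoken_type: Optional[str],
    lookup: Callable[[str], Optional[str]],
) -> ReconciledAircraft:
    """Prefer the type from flight data; fall back to what the controller said."""
    looked_up = lookup(flight_number) if flight_number else None
    if looked_up:
        mismatch = (
            spoken_type is not None and spoken_type.upper() != looked_up.upper()
        )
        return ReconciledAircraft(looked_up.upper(), "flight_data", mismatch)
    if spoken_type:
        return ReconciledAircraft(spoken_type.upper(), "spoken", False)
    return ReconciledAircraft(None, "none", False)


# Stand-in for a real flight-data service (hypothetical data).
fake_flight_data = {"DAL1234": "A333"}
result = reconcile_aircraft_type("DAL1234", "A320", fake_flight_data.get)
```

Keeping the `mismatch` flag lets a display show the corrected type while still signalling that it differs from the spoken alert, which may matter to responders who only heard the audio.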

The extracted data doesn't just display on screens — it can be automatically forwarded to external platforms. Through integrations, the system can push structured alert data to Everbridge, Veoci, INDMEX, or similar operations management tools. The integration triggers the alert process within those platforms automatically, populating event parameters without anyone touching a keyboard.
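As a rough illustration of what "structured alert data" could look like on the wire, the sketch below serializes extracted fields to JSON. The payload shape, the key names, and the `build_alert_payload` function are all hypothetical. Each platform named above defines its own API and schema, which this example does not attempt to reproduce.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def build_alert_payload(
    fields: Dict[str, Any],
    transcript: str,
    issued_at: Optional[datetime] = None,
) -> str:
    """Serialize an alert to JSON (assumed schema, for illustration only)."""
    issued_at = issued_at or datetime.now(timezone.utc)
    payload = {
        "issued_at": issued_at.isoformat(),
        "transcript": transcript,
        # Drop fields the extractor could not fill rather than sending nulls.
        "fields": {k: v for k, v in fields.items() if v is not None},
    }
    return json.dumps(payload, sort_keys=True)


body = build_alert_payload(
    {"alert_type": "2", "runway": "27L", "eta_minutes": None},
    "Alert 2, runway 27 left",
    issued_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
```

An integration layer would then translate a neutral payload like this into whatever each downstream platform expects, so that adding a new platform means adding an adapter, not changing the extraction step.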

This eliminates the manual data entry bottleneck entirely. The moment the tower controller hangs up the phone, the alert is being broadcast across every connected system. One action in the tower initiates a cascade of notifications — audio to ARFF stations, visual displays with extracted data, push notifications to mobile devices, and automated entries in incident management platforms.
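The cascade can be pictured as a fan-out: one alert delivered to several destinations in parallel, with each delivery isolated so that one unreachable system does not hold up the rest. The sketch below is a simplified assumption of that pattern. The `fan_out` function and the sink names are invented for illustration, and the sinks are plain callables standing in for real integrations.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def fan_out(alert: dict, sinks: Dict[str, Callable[[dict], None]]) -> Dict[str, str]:
    """Deliver one alert to every sink in parallel and report per-sink status."""

    def deliver(name: str) -> str:
        try:
            sinks[name](alert)
            return "ok"
        except Exception as exc:  # isolate failures so other sinks still run
            return f"failed: {exc}"

    with ThreadPoolExecutor(max_workers=max(1, len(sinks))) as pool:
        futures = {name: pool.submit(deliver, name) for name in sinks}
        return {name: future.result() for name, future in futures.items()}


# Hypothetical sinks standing in for displays, mobile push, and a platform hook.
received = []

def station_display(alert: dict) -> None:
    received.append(("display", alert["runway"]))

def mobile_push(alert: dict) -> None:
    received.append(("push", alert["runway"]))

def incident_platform(alert: dict) -> None:
    raise RuntimeError("offline")

status = fan_out(
    {"runway": "27L"},
    {"display": station_display, "push": mobile_push, "platform": incident_platform},
)
```

In this run the display and push sinks succeed while the simulated platform outage is reported in `status` without blocking them. A production system would likely add retries and logging on top of this.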

Companion mobile apps for iOS and Android extend the system's reach beyond fixed installations. When an alert is triggered, authorized users receive push notifications on their phones or tablets. The app displays the full transcription, extracted key information in easy-to-read cards, a FlightAware map showing the aircraft's position, and the ability to play back the original alert audio.

This is particularly valuable for personnel who aren't stationed at a fixed location — airport management, mutual aid agencies, or off-duty responders who may need to be recalled. The information reaches them without relying on radio communications or word-of-mouth relay.

The speech-to-text processing can run on-premise, using a physical server installed at the airport, or through a hosted cloud solution.

Both options process the audio with the same speed and accuracy. The choice comes down to your airport's IT infrastructure, security policies, and preference for on-site versus cloud-based processing.

Airports that have deployed these capabilities report measurable improvements. Pre- and post-installation testing at major international airports has documented response time improvements of seventeen seconds or more. That is a significant margin when FAA regulations (14 CFR 139.319) require at least one ARFF vehicle to reach the midpoint of the farthest runway serving air carrier aircraft within three minutes of the alarm.

Seventeen seconds might not sound like much, but it is roughly 9 percent of a three-minute response window, and in emergency response it can mean the difference between a contained incident and a catastrophic outcome. Automated transcription and data extraction eliminate the gaps where information gets lost, delayed, or miscommunicated.

See more about KEANS speech-to-text crash phone technology or schedule a live demonstration of the KEANS system with KOVA Corp.